Hadoop 自定义序列化编程_1.package tem_com; 2.import java.io.ioexception; 3-程序员宅基地

package com.cakin.hadoop.mr;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

import org.apache.hadoop.io.WritableComparable;

public class UserWritable implements WritableComparable<UserWritable> {

private Integer id;

private Integer income;

private Integer expenses;

private Integer sum;

public void write(DataOutput out) throws IOException {

// TODO Auto-generated method stub

out.writeInt(id);

out.writeInt(income);

out.writeInt(expenses);

out.writeInt(sum);

}

public void readFields(DataInput in) throws IOException {

// TODO Auto-generated method stub

this.id=in.readInt();

this.income=in.readInt();

this.expenses=in.readInt();

this.sum=in.readInt();

}

public Integer getId() {

return id;

}

public UserWritable setId(Integer id) {

this.id = id;

return this;

}

public Integer getIncome() {

return income;

}

public UserWritable setIncome(Integer income) {

this.income = income;

return this;

}

public Integer getExpenses() {

return expenses;

}

public UserWritable setExpenses(Integer expenses) {

this.expenses = expenses;

return this;

}

public Integer getSum() {

return sum;

}

public UserWritable setSum(Integer sum) {

this.sum = sum;

return this;

}

public int compareTo(UserWritable o) {

// TODO Auto-generated method stub

return this.id>o.getId()?1:-1;

}

@Override

public String toString() {

return id + "\t"+income+"\t"+expenses+"\t"+sum;

}

}package com.cakin.hadoop.mr;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.mapreduce.Reducer;

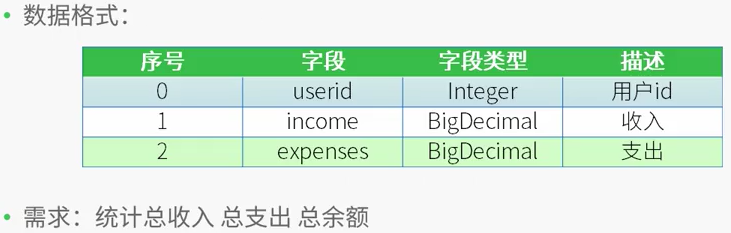

/*

* 测试数据

* 用户id 收入 支出

* 1 1000 0

* 2 500 300

* 1 2000 1000

* 2 500 200

*

* 需求:

* 用户id 总收入 总支出 总的余额

* 1 3000 1000 2000

* 2 1000 500 500

* */

public class CountMapReduce {

public static class CountMapper extends Mapper<LongWritable,Text,IntWritable,UserWritable>

{

private UserWritable userWritable =new UserWritable();

private IntWritable id =new IntWritable();

@Override

protected void map(LongWritable key,Text value,

Mapper<LongWritable,Text,IntWritable,UserWritable>.Context context) throws IOException, InterruptedException{

String line = value.toString();

String[] words = line.split("\t");

if(words.length ==3)

{

userWritable.setId(Integer.parseInt(words[0]))

.setIncome(Integer.parseInt(words[1]))

.setExpenses(Integer.parseInt(words[2]))

.setSum(Integer.parseInt(words[1])-Integer.parseInt(words[2]));

id.set(Integer.parseInt(words[0]));

}

context.write(id, userWritable);

}

}

public static class CountReducer extends Reducer<IntWritable,UserWritable,UserWritable,NullWritable>

{

/*

* 输入数据

* <1,{[1,1000,0,1000],[1,2000,1000,1000]}>

* <2,[2,500,300,200],[2,500,200,300]>

*

* */

private UserWritable userWritable = new UserWritable();

private NullWritable n = NullWritable.get();

protected void reduce(IntWritable key,Iterable<UserWritable> values,

Reducer<IntWritable,UserWritable,UserWritable,NullWritable>.Context context) throws IOException, InterruptedException{

Integer income=0;

Integer expenses = 0;

Integer sum =0;

for(UserWritable u:values)

{

income += u.getIncome();

expenses+=u.getExpenses();

}

sum = income - expenses;

userWritable.setId(key.get())

.setIncome(income)

.setExpenses(expenses)

.setSum(sum);

context.write(userWritable, n);

}

}

public static void main(String[] args) throws IllegalArgumentException, IOException, ClassNotFoundException, InterruptedException {

Configuration conf=new Configuration();

/*

* 集群中节点都有配置文件

conf.set("mapreduce.framework.name.", "yarn");

conf.set("yarn.resourcemanager.hostname", "mini1");

*/

Job job=Job.getInstance(conf,"countMR");

//jar包在哪里,现在在客户端,传递参数

//任意运行,类加载器知道这个类的路径,就可以知道jar包所在的本地路径

job.setJarByClass(CountMapReduce.class);

//指定本业务job要使用的mapper/Reducer业务类

job.setMapperClass(CountMapper.class);

job.setReducerClass(CountReducer.class);

//指定mapper输出数据的kv类型

job.setMapOutputKeyClass(IntWritable.class);

job.setMapOutputValueClass(UserWritable.class);

//指定最终输出的数据kv类型

job.setOutputKeyClass(UserWritable.class);

job.setOutputKeyClass(NullWritable.class);

//指定job的输入原始文件所在目录

FileInputFormat.setInputPaths(job, new Path(args[0]));

//指定job的输出结果所在目录

FileOutputFormat.setOutputPath(job, new Path(args[1]));

//将job中配置的相关参数及job所用的java类在的jar包,提交给yarn去运行

//提交之后,此时客户端代码就执行完毕,退出

//job.submit();

//等集群返回结果在退出

boolean res=job.waitForCompletion(true);

System.exit(res?0:1);

//类似于shell中的$?

}

}[root@centos hadoop-2.7.4]# bin/hdfs dfs -cat /input/data

1 1000 0

2 500 300

1 2000 1000

2 500 200[root@centos hadoop-2.7.4]# bin/yarn jar /root/jar/mapreduce.jar /input/data /output3

17/12/20 21:24:45 INFO client.RMProxy: Connecting to ResourceManager at /0.0.0.0:8032

17/12/20 21:24:46 WARN mapreduce.JobResourceUploader: Hadoop command-line option parsing not performed. Implement the Tool interface and execute your application with ToolRunner to remedy this.

17/12/20 21:24:47 INFO input.FileInputFormat: Total input paths to process : 1

17/12/20 21:24:47 INFO mapreduce.JobSubmitter: number of splits:1

17/12/20 21:24:47 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1513775596077_0001

17/12/20 21:24:49 INFO impl.YarnClientImpl: Submitted application application_1513775596077_0001

17/12/20 21:24:49 INFO mapreduce.Job: The url to track the job: http://centos:8088/proxy/application_1513775596077_0001/

17/12/20 21:24:49 INFO mapreduce.Job: Running job: job_1513775596077_0001

17/12/20 21:25:13 INFO mapreduce.Job: Job job_1513775596077_0001 running in uber mode : false

17/12/20 21:25:13 INFO mapreduce.Job: map 0% reduce 0%

17/12/20 21:25:38 INFO mapreduce.Job: map 100% reduce 0%

17/12/20 21:25:54 INFO mapreduce.Job: map 100% reduce 100%

17/12/20 21:25:56 INFO mapreduce.Job: Job job_1513775596077_0001 completed successfully

17/12/20 21:25:57 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=94

FILE: Number of bytes written=241391

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=135

HDFS: Number of bytes written=32

HDFS: Number of read operations=6

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Launched reduce tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=23672

Total time spent by all reduces in occupied slots (ms)=11815

Total time spent by all map tasks (ms)=23672

Total time spent by all reduce tasks (ms)=11815

Total vcore-milliseconds taken by all map tasks=23672

Total vcore-milliseconds taken by all reduce tasks=11815

Total megabyte-milliseconds taken by all map tasks=24240128

Total megabyte-milliseconds taken by all reduce tasks=12098560

Map-Reduce Framework

Map input records=4

Map output records=4

Map output bytes=80

Map output materialized bytes=94

Input split bytes=94

Combine input records=0

Combine output records=0

Reduce input groups=2

Reduce shuffle bytes=94

Reduce input records=4

Reduce output records=2

Spilled Records=8

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=157

CPU time spent (ms)=1090

Physical memory (bytes) snapshot=275660800

Virtual memory (bytes) snapshot=4160692224

Total committed heap usage (bytes)=139264000

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=41

File Output Format Counters

Bytes Written=32[root@centos hadoop-2.7.4]# bin/hdfs dfs -cat /output3/part-r-00000

1 3000 1000 2000

2 1000 500 500智能推荐

EasyDarwin开源流媒体云平台之EasyRMS录播服务器功能设计_开源录播系统-程序员宅基地

文章浏览阅读3.6k次。需求背景EasyDarwin开发团队维护EasyDarwin开源流媒体服务器也已经很多年了,之前也陆陆续续尝试过很多种服务端录像的方案,有:在EasyDarwin中直接解析收到的RTP包,重新组包录像;也有:在EasyDarwin中新增一个RecordModule,再以RTSPClient的方式请求127.0.0.1自己的直播流录像,但这些始终都没有成气候;我们的想法是能够让整套EasyDarwin_开源录播系统

oracle Plsql 执行update或者delete时卡死问题解决办法_oracle delete update 锁表问题-程序员宅基地

文章浏览阅读1.1w次。今天碰到一个执行语句等了半天没有执行:delete table XXX where ......,但是在select 的时候没问题。后来发现是在执行select * from XXX for update 的时候没有commit,oracle将该记录锁住了。可以通过以下办法解决: 先查询锁定记录 Sql代码 SELECT s.sid, s.seri_oracle delete update 锁表问题

Xcode Undefined symbols 错误_xcode undefined symbols:-程序员宅基地

文章浏览阅读3.4k次。报错信息error:Undefined symbol: typeinfo for sdk::IConfigUndefined symbol: vtable for sdk::IConfig具体信息:Undefined symbols for architecture x86_64: "typeinfo for sdk::IConfig", referenced from: typeinfo for sdk::ConfigImpl in sdk.a(config_impl.o) _xcode undefined symbols:

项目05(Mysql升级07Mysql5.7.32升级到Mysql8.0.22)_mysql8.0.26 升级32-程序员宅基地

文章浏览阅读249次。背景《承接上文,项目05(Mysql升级06Mysql5.6.51升级到Mysql5.7.32)》,写在前面需要(考虑)检查和测试的层面很多,不限于以下内容。参考文档https://dev.mysql.com/doc/refman/8.0/en/upgrade-prerequisites.htmllink推荐阅读以上链接,因为对应以下问题,有详细的建议。官方文档:不得存在以下问题:0.不得有使用过时数据类型或功能的表。不支持就地升级到MySQL 8.0,如果表包含在预5.6.4格_mysql8.0.26 升级32

高通编译8155源码环境搭建_高通8155 qnx 源码-程序员宅基地

文章浏览阅读3.7k次。一.安装基本环境工具:1.安装git工具sudo apt install wget g++ git2.检查并安装java等环境工具2.1、执行下面安装命令#!/bin/bashsudoapt-get-yinstall--upgraderarunrarsudoapt-get-yinstall--upgradepython-pippython3-pip#aliyunsudoapt-get-yinstall--upgradeopenjdk..._高通8155 qnx 源码

firebase 与谷歌_Firebase的好与不好-程序员宅基地

文章浏览阅读461次。firebase 与谷歌 大多数开发人员都听说过Google的Firebase产品。 这就是Google所说的“ 移动平台,可帮助您快速开发高质量的应用程序并发展业务。 ”。 它基本上是大多数开发人员在构建应用程序时所需的一组工具。 在本文中,我将介绍这些工具,并指出您选择使用Firebase时需要了解的所有内容。 在开始之前,我需要说的是,我不会详细介绍Firebase提供的所有工具。 我..._firsebase 与 google

随便推点

k8s挂载目录_kubernetes(k8s)的pod使用统一的配置文件configmap挂载-程序员宅基地

文章浏览阅读1.2k次。在容器化应用中,每个环境都要独立的打一个镜像再给镜像一个特有的tag,这很麻烦,这就要用到k8s原生的配置中心configMap就是用解决这个问题的。使用configMap部署应用。这里使用nginx来做示例,简单粗暴。直接用vim常见nginx的配置文件,用命令导入进去kubectl create cm nginx.conf --from-file=/home/nginx.conf然后查看kub..._pod mount目录会自动创建吗

java计算机毕业设计springcloud+vue基于微服务的分布式新生报到系统_关于spring cloud的参考文献有啥-程序员宅基地

文章浏览阅读169次。随着互联网技术的发发展,计算机技术广泛应用在人们的生活中,逐渐成为日常工作、生活不可或缺的工具,高校各种管理系统层出不穷。高校作为学习知识和技术的高等学府,信息技术更加的成熟,为新生报到管理开发必要的系统,能够有效的提升管理效率。一直以来,新生报到一直没有进行系统化的管理,学生无法准确查询学院信息,高校也无法记录新生报名情况,由此提出开发基于微服务的分布式新生报到系统,管理报名信息,学生可以在线查询报名状态,节省时间,提高效率。_关于spring cloud的参考文献有啥

VB.net学习笔记(十五)继承与多接口练习_vb.net 继承多个接口-程序员宅基地

文章浏览阅读3.2k次。Public MustInherit Class Contact '只能作基类且不能实例化 Private mID As Guid = Guid.NewGuid Private mName As String Public Property ID() As Guid Get Return mID End Get_vb.net 继承多个接口

【Nexus3】使用-Nexus3批量上传jar包 artifact upload_nexus3 批量上传jar包 java代码-程序员宅基地

文章浏览阅读1.7k次。1.美图# 2.概述因为要上传我的所有仓库的包,希望nexus中已有的包,我不覆盖,没有的添加。所以想批量上传jar。3.方案1-脚本批量上传PS:nexus3.x版本只能通过脚本上传3.1 批量放入jar在mac目录下,新建一个文件夹repo,批量放入我们需要的本地库文件夹,并对文件夹授权(base) lcc@lcc nexus-3.22.0-02$ mkdir repo2..._nexus3 批量上传jar包 java代码

关于去隔行的一些概念_mipi去隔行-程序员宅基地

文章浏览阅读6.6k次,点赞6次,收藏30次。本文转自http://blog.csdn.net/charleslei/article/details/486519531、什么是场在介绍Deinterlacer去隔行处理的方法之前,我们有必要提一下关于交错场和去隔行处理的基本知识。那么什么是场呢,场存在于隔行扫描记录的视频中,隔行扫描视频的每帧画面均包含两个场,每一个场又分别含有该帧画面的奇数行扫描线或偶数行扫描线信息,_mipi去隔行

ABAP自定义Search help_abap 自定义 search help-程序员宅基地

文章浏览阅读1.7k次。DATA L_ENDDA TYPE SY-DATUM. IF P_DATE IS INITIAL. CONCATENATE SY-DATUM(4) '1231' INTO L_ENDDA. ELSE. CONCATENATE P_DATE(4) '1231' INTO L_ENDDA. ENDIF. DATA: LV_RESET(1) TY_abap 自定义 search help